Beyond Hyperanthropomorphism

Or, why fears of AI are not even wrong, and how to make them real

This research note is part of the Mediocre Computing series

One of the things that makes me an outlier in today’s technology scene is that I cannot even begin to understand or empathize with mindsets capable of being scared of AI and robots in a special way. I sincerely believe their fears are strictly nonsensical in a philosophical sense, in the same sense that I consider fear of ghosts or going to hell in an “afterlife” to be strictly nonsensical. Since I often state this position (and not always politely, I’m afraid) and walk away from many AI conversations, I decided to document at least a skeleton version of my argument. One that grants the AI-fear view the most generous and substantial interpretation I can manage.

Let me state upfront that I share in normal sorts of fears about AI-based technologies that apply to all kinds of technologies. Bridges can collapse, nuclear weapons can end the world, and chemical pollution can destabilize ecosystems. In that category of fears, we can include: killer robots and drones can kill more efficiently than guns and Hellfire missiles, disembodied AIs might misdiagnose X-rays, over-the-air autopilot updates might brick entire fleets of Teslas causing big pile-ups, and trading algorithms might cause billions in losses through poorly judged trades. Criticisms of the effects of existing social media platforms that rely on AI algorithms fall within these boundaries as well.

These are normal engineering risks that are addressable through normal sorts of engineering risk-management. Hard problems but not categorically novel.

I am talking about “special” fears of AI and robots. In particular, ones that arise from our tendency to indulge in what I will call hyperanthropomorphic projection, which I define as attributing to AI technologies “super” versions of traits that we perceive in ourselves in poorly theorized, often ill-posed ways. These include:

“Sentience”

“Consciousness”

“Intentionality”

“Self-awareness”

“General intelligence”

I will call the terms in this list pseudo-traits. I’m calling them that, and putting scare quotes around all of them, because I think they are all, without exception, examples of what the philosopher Gilbert Ryle referred to as “philosophical nonsense.” It’s not that they don’t point to (or at least gesture at) real phenomenology, but that they do so in a way that is so ill-posed and not-even-wrong that anything you might say about that phenomenology using those terms is essentially nonsensical. But this can be hard to see because sentences and arguments written using these terms can be read coherently. Linguistic intelligibility does not imply meaningfulness (sentences like “colorless green ideas sleep furiously” or “water is triangular” proposed by Chomsky/Pinker are examples of intelligible philosophical nonsense).

To think about AI, I myself use a super-pseudo-trait mental model, which I described briefly in Superhistory, not Superintelligence. But my mental model rests on a non-anthropomorphic pseudo-trait: time, and doesn’t lead to any categorically unusual fears or call for categorically novel engineering risk-management behaviors. I will grant that my model might also be philosophical nonsense, but if so, it is a different variety of it, with its nonsensical aspects rooted in our poor understanding of time.

Note that it is possible to use the listed pseudo-trait terms carefully in non-nonsensical ways, which is what philosophers often try to do. For example, the philosopher Ned Block manages to talk about consciousness in what I find to be genuinely useful ways. Michael Bratman talks of intentionality in similarly non-nonsensical ways. Such usages are like alchemists managing to do real chemistry with inadequate terminology. But to the extent these usages are non-nonsensical, the philosophers who use them invariably work with more limited senses of the terms than are typically used in informal AI-fear discourses.

In this essay, I’m going to mainly talk about these pseudo-traits as they’re talked about in informal, philosophical-nonsense AI discourses, which is about 90% of the popular discourse around AI.

1. Nonsense Maps of Real Territories

To the extent it is possible to use a loaded, usually-pejorative term like “nonsense” in a technical and neutral way, that is the way I intend it. As a descriptive adjective relative to a certain standard of rigor in philosophical argumentation (which you may or may not accept), not as a slur.

Trying to navigate a complex question using philosophical nonsense descriptions is like trying to navigate the oceans using the semi-mythological old maps with “here be dragons” marked in various places — which means you might carry with you various anti-dragon mystical weaponry, magic spell books, “dragon alignment” incantations, and so on. Since your actual risks involve (say) storms and sharks, these preparations for oceanic voyaging are not even wrong.

If your preparations do turn out to be appropriate (spears intended for mythical dragons can be used against real sharks, but any spells you’ve cast on the spear are irrelevant), it is by accident. But outside of such accidental utility (a Gettier counterexample type issue), the terms of understanding are philosophical nonsense, and therefore the maps do not describe the territory in sufficiently usable ways to be considered “truthful.” Imperatives suggested by them cannot be considered important or urgent, if they can be acted upon at all (if I tell you “we must study and classify dragons!” where would you start?).

The situation with AIs is not quite as bad as it is with mythical dragons, though.

Unlike say Daniel Dennett, I don’t think the phenomenology itself is non-existent, or an artifact of some sort of confusion that might go away if we develop better understandings of reality. There’s a there there that the pseudo-trait terms gesture at. Our current language (and implied ontology) is merely inadequate to the point of uselessness as a means of apprehension.

If and when we ever understand the phenomenology via more usable constructs, we might replace pseudo-trait terminology and philosophical nonsense with trait terminology and usable models. This is similar to how we might think about dark matter and dark energy today. There’s probably real phenomenology there, but those particular pseudo-constructs are no more than gestures at parts of equations that seem to work but we don’t fully understand.

So that’s our starting point: the pseudo-traits of AI-fear discourse are philosophical nonsense, but we’ll grant they might be gesturing at real things. Let’s build from there.

2. SIILTBness

I need to introduce a second borrowed term of art here, the idea of there being something it is like to be an entity, which I will shorten to SIILTBness.

Philosophers of mind use that particular phrase to point to a feature of at least some sufficiently complex living things. Let’s arbitrarily set the complexity boundary at salamanders. The specific location of the boundary on the complexity scale does not matter, merely the assumption that such a fuzzy boundary exists.

So there is something it is like to be a salamander. There is something it is like to be a bat (the original example1 used by Thomas Nagel who introduced this approach). There is something it is like to be a chimpanzee. There is something it is like to be a human. My idiosyncratic definition of something it is like to be-ness is:

It is what commonly deployed pseudo-trait terms collectively try to point to

It entails the existence of a coherent quality of experience associated with an entity

I’m going to assume, without justification or argument, that we can attribute SIILTBness to all living animals above the salamander complexity point, but I won’t attempt to characterize it (some do, for example, in terms of mirror-recognition tests and so forth, but it is not important for my argument to add such details).

As a concept carefully constructed and used by philosophers, SIILTBness is generally talked about in non-philosophical-nonsense ways, though of course, it too has nonsense potential. So it is a useful term to use instead of the pseudo-traits often used to point to it (or aspects of it).

The second condition says that when there is something it is like to be a thing, there is also something it is like to experience reality as that thing.

This is where the subtlety of Nagel’s formulation kicks in. We are not talking about what it might be like for you to be a bat. We are talking about what it is like for a bat to be a bat. Or if you want the harder version, one that includes what is known as the indexicality problem, what it is like for a specific bat to be itself. So body switching, or John-Malkoviching, even within a single species, is not what we’re talking about.

But let’s stick to the somewhat easier version, and only consider an equivalence class of entities that might have interchangeable SIILTBness, like simple organisms. Perhaps if you body-switched two very young genetic-twin bats that were sleeping hanging next to each other in the same dark cave, they wouldn’t even notice when they woke up. At best, they'd feel like they'd been moved a few inches over by a mischief-maker in the night. This koan-like outcome would not be very illuminating philosophically, since it would mean you can’t actually use SIILTBness as a discriminant for anything.2

Now the thing about SIILTBness is that even where we are confident that an entity possesses SIILTBness, we can only experience the associated quality of experience in extremely weak, superficial, non-indexically-specific ways. For example, consider this spectrum of possibilities in the range between body-switching and SIILTB-experience:

You can dress as a different gender and go out in public and experience something of how the other gender experiences the world

You could disguise yourself very accurately as a famous celebrity using prosthetic masks, and mildly experience what it’s like to be them

You could stand on top of a ladder, and put on a special vision prosthetic that folds your lines of sight 90 deg, and shifts them a foot to each side, to experience what it is like to “see” like an elephant

You could wear a helmet that can do sonar, and translates what it senses into scalp pressure, and get a slight feel for what it is like to be a bat

You can take hormone injections and experience what it is like to have a different personality, like say an angrier one

The technology for this kind of what might be called live-experience transpiling (trans-compiling) is of course improving all the time. The big constraint is actually not sensory realism but memory realism. Since your experience of being you is an ongoing integration of everything you have previously experienced (memory) and things you are experiencing now (sensory experience), unless we invent some sort of designer amnesia and memory-transplant technology, there is no good way to complete the something-it-is-like-to-be transpilation to another entity.

But let’s grant that that could happen. There’s nothing fundamentally mysterious about that speculation. Deep-immersive sensory-neurochemical prosthetic realism plus memory wipes and transplantation could perhaps equal near-perfect fidelity SIILTB transpilation.

This gets us closer to the real problem. The SIILTBness of the two entities that might be involved in a transpilation has to match in some sense. The recipient of a SIILTB transpilation has to have a brain that is at least as complex in its capacity for experience as the source. You can perhaps approximately experience what it is like to be a salamander, but a salamander cannot ever experience what it is like to be you, because its brain is simpler.

And this is not about peripheral difference in the construction of the embodiment (like kind of vision, number of limbs, special morphology like wings). It is about computational capacity, or some superset of computational phenomenology.

In the spirit of looking for the most generous view of AI fears, let’s disregard the second (and in my opinion more likely) possibility, and stipulate that whatever SIILTBness is, it can be embodied by computation as we understand it today. For those of you familiar with the various schools of thought here, this is me accepting Strong AI as a starting point for this argument, even though I don’t actually buy the Strong AI position philosophically.

Let’s see if we can get to well-posed hyperanthropomorphic projections, and on to well-posed uniquely-about-AI fears, even from Strong AI starting points.

3. The Salamander of Theseus

Now, obviously, the only reason we are even tempted to talk about AI and robots in qualitatively and categorically different terms than bridges, nuclear bombs, or toxic chemicals, is that they possess something that we consider roughly equivalent to our own brains.

To a first approximation, computer and robot brains can be assumed to be as general, and as powerful, as our own, and therefore, via a generous assumption, capable of SIILTBness. This would mean all the pseudo-traits point to something potentially real, and that we can meaningfully talk about super versions of things.

If computers are not already there, perhaps they soon will be. To the extent there are detail-level differences, such as brain neurons having vastly more connections than deep learning networks, it is not unreasonable to assume that they’re still close enough to map to each other in at least lossy ways.

In other words, to the extent the computer is like the brain, there should be something it is like to be a computer, and we should be able to experience at least some impoverished version of that, and going the other way, there should be something it is like for a computer to experience being like a human (or superhuman).

Even if they are too simple, and the limits of Moore’s Law will prevent them from ever reaching human scale, there should at least be something it is like for a much simpler being, which we are confident possesses SIILTBness, to experience what it is like to be a computer and vice versa.

I.e., at the very least, it should be possible for a salamander to experience what it is like for a computer to be a computer, and vice versa. I can buy into this very limited expectation starting with Strong AI premises.

One way to get there is via a thought experiment that I will call the Salamander of Theseus. It combines the Ship of Theseus thought experiment, and a kind of neuronal-substitution thought experiment first popularized by Dennett and Hofstadter in their classic, The Mind’s I.

Imagine a salamander’s brain neurons being slowly, microscopically, replaced by functionally equivalent silicon-based circuitry, perhaps running simple convolution computations of the sort modern CNNs do. The replacement process is designed to progress through to a completely silicon-based salamander brain, so that living biological salamander S eventually becomes robot salamander A.

Let us stipulate that we can also construct such a silicon brain modeled on a salamander brain all at once (rather than by substitution), to construct a robot salamander B as a reference entity. We don’t know whether or not there is something it is like to be robot salamander B, but it is behaviorally sufficiently similar to robot salamander A, created by our substitution process, for the question to be well-posed.

Now what are we actually doing here?

(1) Killing biological salamander S slowly, creating 2 philosophical-zombie robot salamanders, A and B

(2) Creating a robot salamander A with SIILTBness, and a p-zombie twin, B

(3) Allowing salamander S to fully experience the SIILTBness of a non-p-zombie B

(4) Allowing S to experience SIILTBness of A, which emerges during the replacement process, while B remains a zombie

None of these options is particularly satisfactory, but none of them is obviously and intuitively true either, let alone provably so. Option 4, incidentally, is the Silicon Frankenstein option — the idea that something mysterious happens somewhere along the way that lets SIILTBness emerge in silico via Ship-of-Theseus entanglement with a thing that presumptively possesses transferable SIILTBness.

To the extent we can model salamander-brain functions well enough (and here we are talking micro-functions, like convolution for edge detection for visual processing), the functional reductiveness itself does not seem to be a philosophical show-stopper.
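For readers who have not seen one, here is a minimal sketch of the kind of micro-function meant here: a small 2D convolution that detects vertical edges. The Sobel kernel, the toy image, and the function name `convolve2d` are all illustrative assumptions, not something specified in this essay, and the sketch makes no claim about how real salamander neurons work. It only shows how modest and legible such a function is.

```python
def convolve2d(image, kernel):
    """Valid-mode 2D cross-correlation (the operation CNN layers call 'convolution')."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(
                image[r + i][c + j] * kernel[i][j]
                for i in range(kh)
                for j in range(kw)
            )
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Assumption: a standard Sobel kernel that responds to left-to-right brightness changes.
SOBEL_X = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]

# Toy "retina": dark on the left, bright on the right, one vertical edge in the middle.
image = [
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
]

edges = convolve2d(image, SOBEL_X)
for row in edges:
    print(row)
```

The response is nonzero only where the window straddles the dark-to-bright boundary and zero over the flat regions. Nothing in this arithmetic looks like a candidate for carrying or destroying SIILTBness on its own, which is the sense in which the functional reductiveness is not an obvious show-stopper.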

While we can’t be sure some mysterious ontological essences are not lost (or gained) in the porting of functions from neurons to silicon, the functional reductiveness doesn’t seem to imply effects that would clearly break ontological continuity in the transformation. Note that we are not trying to make a perfect robot salamander. We’ll be happy with a messed-up, mentally disabled robot salamander. All we are looking for is grounds to accept that SIILTBness of some sort might exist in A.

We are trying to get the salamander to experience what it is like to be the computer (or vice versa) by replacing it piecemeal, Ship of Theseus style. To argue for a vitalist essentialism is to argue that at some point, salamander S must lose SIILTBness, or the emerging robot salamander A must gain it, due to some unknown effect that is missing in the construction process of robot salamander B, but categorically decoupled from functional characteristics of the physical brains involved (biological or silicon).

I can’t think of good reasons for why there must be some such gain or loss, but also can’t think of good reasons why there cannot be. So I am open to the possibility that computer brains can have SIILTBness.

The point of using salamanders in this thought experiment is that SIILTBness has little to nothing to do with the level of “intelligence” or other pseudo-trait under consideration. To the extent the pseudo-trait of “intelligence” is involved in SIILTBness at all, it doesn’t matter whether it is “super” or “general” in any sense. We’re not even talking about super-salamanders yet, let alone super-humans or “AGIs”.

Actually existing salamander brains are neither super, nor general in any interesting way. Sure, they might be (finite memory) Turing equivalent at a Turing-tarpit level, but they are not practically general in the cartoon AGI-fears sense.

But this does not matter for SIILTBness.

There might be something it is like to be a computer or robot at salamander levels of capability at least, or there might not. But it’s a well-posed possibility, at least if you start with Strong AI assumptions. My fellow AI-fear skeptics might feel I’m ceding way too much territory at this point, but yes, I want to grant the possibility of SIILTBness here, just to see if we get anywhere interesting.

But you know what does not, even at this thought-experiment level, possess SIILTBness? The AIs we are currently building and extrapolating, via lurid philosophical nonsense, into bogeymen that represent categorically unique risks. And the gap has nothing to do with enough transistors or computational power.

So let’s finally talk about the basis for AI-fearmongering: hyperanthropomorphic projection.

4. Well-Posed Hyperanthropomorphism

Again, I want to start from as generous a starting point as I’m capable of entertaining. I won’t dismiss the possibility of hyperanthropomorphic projection being meaningful out of hand. But I’ll posit what I think are reasonable necessary conditions for it to be well-posed.

In my opinion, there are two necessary conditions for hyperanthropomorphic projection to point to something coherent and well-posed associated with an AI or robot (which is a precondition for that something to potentially become something worth fearing in a unique way that doesn’t apply to other technologies):

(1) SIILTBness: There is something it is like to be it

(2) Hyperness: The associated quality of experience is an enhanced entanglement with reality relative to our own on one or more pseudo-trait dimensions

Almost all discussion and debate focuses on the second condition: various sorts of “super”-ness.

This is most often attached to the pseudo-trait dimension of “intelligence,” but occasionally to others like “intentionality” and “sentience” as well. Super-intentionality for example, might be construed as the holding of goals or objectives too complex to be scrutable to us.

“Sentience” is normally constructed as a 0-1 pseudo-trait that you either have or not, but could be conceptualized in a 0-1-infinity way, to talk about godly levels of evolved consciousness for example. A rock might have Zen Buddha-nature but is not sentient, so it is a 0. You and I are sentient at level 1. GPT-3 is 0.8 but might get to 1 with more GPUs, and thence to 100. At a super-sentience level of 1008 we would grant it god-status. I think this is all philosophical nonsense, but you can write and read sentences like this, and convince yourself you’re “thinking.”

Each of these imagined hyper-traits is a sort of up-sampling of the experience of our own minds based on isolated point features where such up-sampling is legible. The argument goes something like this: You can think 2 moves ahead, and that’s part of “intelligence,” and there is something it is like to think 30 moves ahead, and that’s part of “super-intelligence.”

As a result, you get arguments that effectively try to bootstrap general existence proofs about nebulous pseudo-traits from point-feature trajectories of presumptive components of it. So AlphaGo Zero becomes “proof” of the conceptual coherence of “super-intelligence,” because the “moves ahead” legible metric makes sense in the context of the game of Go (notably, a closed-world game without an in-game map-territory distinction). This sort of unconscious slippage into invalid generalizations is typical of philosophical arguments that rest on philosophical nonsense concepts.

If it’s not clear why, consider this: a bus schedule printed on paper arguably thinks 1000 moves ahead (before the moves start to repeat), and if you can’t memorize the whole thing, it is “smarter” than you.

I’m not trying to be cute here.

The point is, trying to infer the properties of general pseudo-trait extrapolations (if you begin by generously granting that the pseudo-trait might point to something real, even if only in a nonsensical way) through trends in legible variables like “moves ahead” or “number of parameters in parameter space” is an invalid thing to do.

It’s like trying to prove ghosts exist by pointing to the range of material substantiveness that exists between solid objects and clouds of vapor, so you get to an “ectoplasm” hypothesis, which is then deployed as circumstantial “evidence” in favor of ghosts.

So how does this go wrong? It goes wrong by producing what I call rhyming philosophical nonsense.

5. Rhyming Philosophical Nonsense

If you and I are merely “intelligent,” “intentional,” and “capable,” hyperanthropomorphic projection imagines entities that are “superintelligent” and capable of holding “superintentions” that are somewhere between indifferent to hostile to our own, and pursuing them with “supercapability” that we cannot compete with. And so we end up with the problem statement that we have to “align” its “intentions” with our own.

This is dragon-hunting with magic spells based on extrapolating the existence of clouds into the existence of ectoplasm. We’re using two rhyming kinds of philosophical nonsense (one that might plausibly point to something real in our experience of ourselves, and the other something imputed, via extrapolation, to a technological system) to create a theater of fictive agency around made-up problems.

Note that we are not talking about ordinary engineering alignment. If I align the wheels and steering of my car, my “intention” (steering angle) is “aligned” with that of the wheels (turn angle). If I set my home thermostat to a comfortable 68F, I am “aligning” the intentions of that very simple intelligence to my own. Done. Alignment problem solved.

For a steering mechanism to drive wheels, or for a simple PID controller to track a set-point, is a kind of intentionality that is simple enough to not be philosophical nonsense. These are maps that coherently talk about associated territories in useful ways. But clearly this sort of thing is not what the AI scaremongering is about.
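To make concrete how thin this non-nonsensical kind of "intention" is, here is a minimal sketch of a PID controller holding a room at a 68F set-point. Everything in it is an illustrative assumption rather than something from this essay: the gains, the heater power limits, the toy thermal model, and the names `PID` and `simulate_room` are all made up for the example.

```python
from dataclasses import dataclass


@dataclass
class PID:
    """Textbook PID controller; the gains are hypothetical, picked for this toy room."""
    kp: float
    ki: float
    kd: float
    integral: float = 0.0
    prev_error: float = 0.0

    def step(self, setpoint: float, measured: float, dt: float) -> float:
        # The entire "intention" of the device is this one error term.
        error = setpoint - measured
        self.integral += error * dt
        derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate_room(setpoint_f=68.0, start_f=55.0, outside_f=50.0, steps=1000, dt=1.0):
    """Run the controller against a made-up first-order thermal model of a room."""
    pid = PID(kp=0.8, ki=0.05, kd=0.0)
    temp = start_f
    for _ in range(steps):
        # Assumed heater: power limited to the range 0..5 in arbitrary units.
        heat = max(0.0, min(5.0, pid.step(setpoint_f, temp, dt)))
        # Assumed physics: heater warms the room, walls leak heat to the outside.
        temp += dt * (0.1 * heat - 0.02 * (temp - outside_f))
    return temp


if __name__ == "__main__":
    print(f"final temperature: {simulate_room():.2f} F")
```

Under these toy assumptions the simulated room overshoots a little and then settles close to the set-point. The whole "alignment" interface is a single number, the set-point, and the whole "intention" is a single subtraction, which is why this map describes its territory without any philosophical strain.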

The problem arises because of a sort of motte-and-bailey maneuver where people use the same vague terms (like “intention”) to talk about thermostats, paperclip maximizers, and inscrutable god intelligences that are perhaps optimizing some 9321189-dimensional complex utility function that is beyond our puny brains to appreciate.

Other pseudo-traits suffer from the same problem. The big one, “intelligence,” certainly does. A trivial chip can do basic arithmetic better than us and salamanders, and you can call that “simple intelligence” if you like, but you have to actually establish that “general” intelligence is a coherent analogue of that, and that as you turn some sort of “generality” knob, there’s a magic threshold past which the thing is general and capable of callously killing you in a very special way.

Let me set aside my belief that optimization theory (and its generalizations like satisfiability theory and preference-ordering theory) is itself a philosophically nonsensical frame3 for talking about the behaviors of SIILTB entities only describable with philosophically nonsensical pseudotraits, calling into question things like utility functions.

The question is, even if we grant that there’s something meaningful being talked about here, what the hell IS it we’re talking about?

It’s not thermostats regulating temperature. It’s not actual paperclip maximization, since that’s clearly a notional element in a thought experiment. It’s not ordinary non-super salamanders experiencing what it is like to be a computer or vice versa. So what are we talking about?

What are we actually talking about that is neither in the category of ordinary technological risks, nor in the category of biological risks posed by things we do attribute SIILTBness to, like diseases carried by salamanders (super or not)?

The whole problem here arises because hyperanthropomorphic projection skips straight to the second necessary condition without even establishing the first.

And note that I am being extremely generous here: I am granting that computational-SIILTBness may exist at salamander level. I’d just like someone to establish something similar for, say, GPT-3 or DALL-E 2, before we get into extrapolations into the future via various pseudo-trait eigenvectors of improvement.

I don’t think the AI scaremongers can do this, because they do not actually understand condition 1 at all. They do not get what it means to establish SIILTBness or why it matters.

6. The Naive Case for Fear

I’ve made a complex argument (and to my mind, a very generous one, though it may not be seen that way) so far. So let me summarize what I suspect is going on unconsciously with sincere AI fearmongers.

SIILTBness is the key quality required to anchor pseudo-traits like “intelligence”

AI-scaremongers skip past SIILTBness to specific pseudotraits like intelligence

They then extrapolate these pseudotraits using specific component metric trends (“n moves ahead” or “trillions of parameters”)

Then they reconstruct the pseudotraits from the extrapolated metrics (2x moves ahead is 2x more intelligent)

Then they extrapolate sideways to infer that other pseudo-traits like “sentience” are also evolving along parallel trend-lines

Then they implicitly re-introduce a SIILTBness for the composite extrapolated entity and give it a name: “AGI” or “Super-intelligence”

Then they proceed to make arguments about the behavior of these imagined entities in terms of imputed coherent super-traits

FINALLY, they argue that these behaviors somehow constitute a unique threat class that requires special treatment via rhyming-philosophical-nonsense problems like “alignment” that are ineffably different qualitatively from normal engineering alignment problems.

Even with the most generous versions of the fear argument I’m capable of entertaining, this is what I get to. To be sure, not all who hold AI fears of various sorts are the same, and there is a lot of variety in the various positions. But some version of this line of thought, traversed in sloppy, unconscious ways, is what leads to lurid fears like “a self-improving general intelligence will emerge and destroy us.”

This is a philosophically naive and incoherent case for fear that deserves to be taken about as seriously as fear of ghosts or hell.

Is there a more serious case to be made?

7. Well-Posed God AIs

The basic challenge here is that of constructing a “super” entity of some sort, via a reasonable extrapolation of today’s AI technologies, which deserves some sort of special treatment in terms of the risks it represents, and the responses it calls for.

To me, this boils down to one question: can we actually conceive of a well-posed God AI with one or more “super” traits that anchor to a meaningful SIILTBness?

The hard part of this problem is not cranking up the knobs to “super” but constructing a well-posed entity for which the pseudo-traits attached to the knobs will resolve into real traits. In other words, the challenge is not the construction of super-ness of any sort, but constructing a hyperanthropomorphic SIILTBness in the first place for the superness to be about.

If we can do this, we will have a well-posed God AI that might then exhibit behaviors it might be worth fearing in unique ways and “aligning” with.

Again, running with the best-case what-if (or what some would call worst-case), I think I know how to proceed. The key is two traits I’ve already talked about in the past that are associated with robots, but not disembodied AIs: situatedness, and embodiment.

These are not important for their own sake, but because they are partially sufficient conditions for constructing SIILTBness.

Situatedness means the AI is located in a particular position in space-time, and has a boundary separating it from the rest of the world.

Embodiment means it is made of matter within its boundary that it cares about in a specific way, seeking to perhaps protect it, grow it, perpetuate it, and so on.

The AI might seek to expand both of course, and consume the world to form a planet-sized body, but it still has to start somewhere. With Cartman’s trapper-keeper for example.

Why? The reason is that situatedness and embodiment are two factors that allow SIILTBness to emerge in at least a rudimentary form. This is already obvious from the definitions.

Situatedness: There is something it is like to be a robot, if at the very least there is a mechanism by which you can inhabit its body envelope, such as through a VR headset attached to its sensors and actuators.

Embodiment: There is something it is like to be a robot, if at the very least the events affecting its material body can register in your brain (pain qualia for example).

Why are these important, and why don’t current AIs have them? The thing is, robots can have what I call worldlike experiences by virtue of being situated and embodied that an AI model like GPT-3 cannot. Being robot-like is not the only way for an AI to have a worldlike experience of being, but it is one way, so let’s start there.

8. Worldlike Experiences

Let’s return to the second condition for SIILTBness in Section 2: “It entails the existence of a coherent quality of experience associated with an entity”

What I mean by “coherent quality of experience” is that there is a coherence to the experience of the world associated with being an entity. There is something it is like to be X because there is something the world is like to experience as X.

This is a heavily loaded statement. In fact it is as loaded as possible, because I’m assuming the “world” exists!

This is not normally something you have to stipulate in philosophical discussions, but with AI, you have to. So let me stipulate that there exists a thing corresponding to the “world” (by which I mean the whole cosmos, not just Earth). It’s not all just maya in my head like Advaita philosophers and certain sorts of Buddhists believe for instance.

This is a powerful philosophical commitment: the world exists. If you object to that, we can have a different metaphysics argument.

Having stipulated that, how do we come to have a coherent experience of it? If you think about it, this is in fact the question of SIILTBness turned around. There is something worldlike about the quality of your experience if and only if there is something it is like to be you. Your experiences are worldlike exactly to the extent there is something it is like to be you.

The brain of a child, William James famously asserted, is a “blooming, buzzing confusion” that eventually resolves first into an I and world via a partitioning of the experience field and construction of a boundary of self, and then acquires further refinements, such as a model of reality being distinguished from the sensory experience of it, with aspects such as object persistence and other-minds. Whether or not James’ particular guessed phenomenology of the ontogeny of self is valid, it seems safe to assume that somewhere between fertilized egg and a five-year-old mind, SIILTBness emerges from some other condition of being. This is no more unreasonable to assume than what we assumed in our Salamander of Theseus thought experiment.

By the time there is something it is like to be a growing child, deserving of a he/she/they pronoun rather than an it, the child’s being already comprises the following elements, at least based on my own n=1 experience:

- An I that can have pseudo-traits like “intention,” contained within a physical boundary (situatedness) and possessed of materiality (embodied substance)

- Intuitive commitment to the existence of a world, or not-I, for the pseudo-traits to be about, one that is materially and spatio-temporally outside the boundary of the I

- Perceptions sorted into I and not-I

- Maps within I of both I and not-I

- Further discernment of other minds in the not-I, recognized as having SIILTBness

Note that these are not formal beliefs symbolically encoded and reasoned with. This is the phenomenological structure of the experience of being. Without these elements, the self decoheres, either into some version of the blooming, buzzing confusion James imputed to the infant brain, or something else that equally lacks a “coherent quality of experience.”

This is the content of SIILTBness. Every one of these structuring elements is extraordinarily hard to establish, and to disrupt once established.

Why does SIILTBness emerge in a human as it grows from infancy? The answer, I think, is painfully simple: because we experience the actual world, which it is in fact non-nonsensical to partition into I and not-I, and proceed from there.

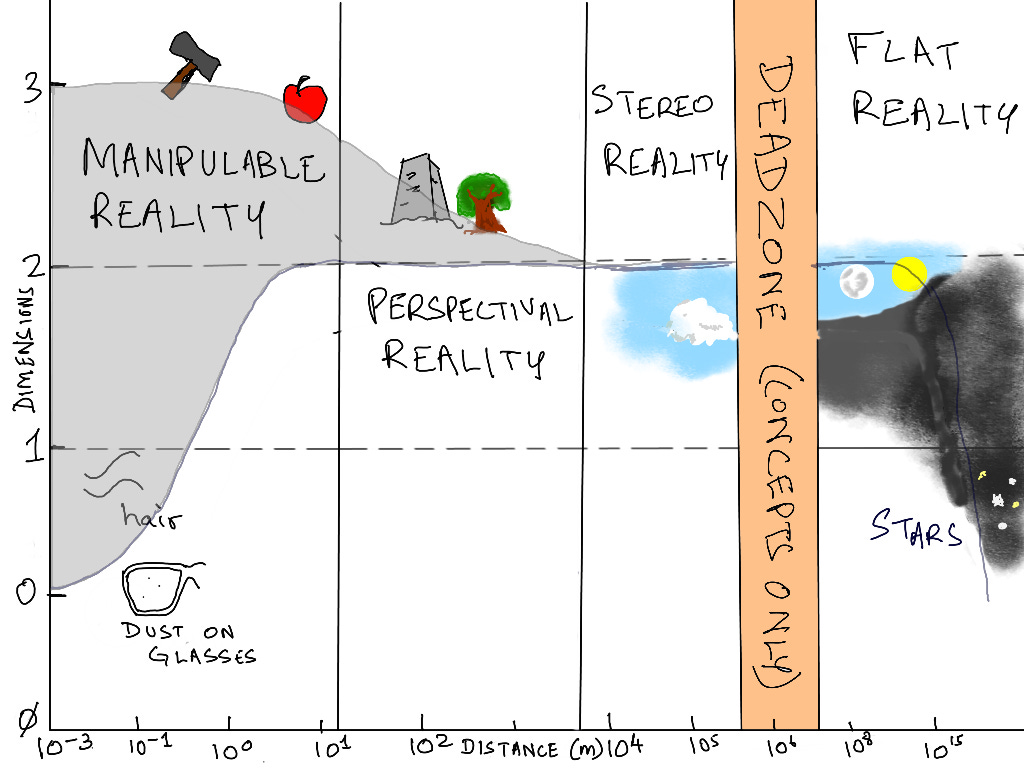

To take just visual-tactile experience alone, consider our own worldlike experience, as illustrated in this picture. Note how much structure there is! We experience the world as a 3d environment close-up (our hands and mouths are our only true 3d sensors). Further out, we experience it in read-only perspectival ways (call it 2.5d), where our own agent-like motion alters the sensory experience in predictable ways. Even further out, we have depth perception and a stereo-reality (2.1d): clouds, distant mountains, and atmospheric phenomena. Then there’s a beyond-the-horizon deadzone of things between 1000-10,000 km away that we must model indirectly with no direct sense-data (null-d). And finally, looking up, sensory reality starts existing again from the Moon on out, 10^8 - 10^15m away and beyond: an asymptotic 2d spatial reality.

Notably, this worldlike experience is neatly organized along a “distance” dimension in something we can construct as space, and the spatial dimensionality of our worldlike experience coherently goes from a range of 0-3, to 2-2.5 down to 2 as we look farther out. And our eyes, hands, and brains detect and exploit all this structure to build efficient models of the world in spatial terms, and in particular, one that allows a physical boundary of self to eventually emerge on the order of 10^1 m out, to partition the richest sensory experiences into an I.

The blooming-buzzing confusion of an infant’s world is some blurry version of this experience, and the important thing is that it can bootstrap into a worldlike experience, because there is, in fact, a world out there to be experienced!

And this is just a tiny aspect of the structuredness of the world that we experience in the process of coming into being as entities there is something it is like to be.

9. Internet Data is Not Worldlike

But now consider our best modern AIs, trained on a very different sort of “blooming buzzing confusion” — the contents of the scraped internet.

Is that a worldlike experience? One that will naturally resolve into an I, not-I, sorted perceptions, map-territory distinctions, other minds to which SIILTBness can be imputed by the I, and so on?

The answer is clearly no. The sum of the scraped data of the internet isn’t about anything, the way an infant’s visual field is about the world. So anything trained on the text and images comprising the internet cannot bootstrap a worldlike experience. So conservatively, there is nothing it is like to be GPT-3 or DALL-E 2, because there is nothing the training data is about. To talk of a superintelligent version of GPT is like Douglas Adams’ joke in Hitchhiker’s Guide to the Galaxy about the Hooloovoo, a superintelligent shade of the color blue. A trait or pseudo-trait like “intelligence” requires as a referent an entity that there is something it is like to be, and a color is not such an entity.[4]

Could you argue that the processes at work in a deep-learning framework are sufficiently close to those of the brain that the AI is still constructing an entirely fictional, dream-like, surreal worldlike experience? One containing an unusual number of cat-like visual artifacts, and lacking a “distance” dimension, but coherent in other ways, a maya-world for itself? Perhaps it is evolving what Bruce Sterling has been calling “alt intelligence”?

Certainly. But to the extent the internet is not about the actual world in any coherent way, but just a random junk-heap of recorded sensations from it, and textual strings produced about it by entities (us) that it hasn’t yet modeled, a modern AI model cannot have a worldlike experience by overfitting the junk-heap. At best, it can have a surreal, dream-like experience. One that, at best, encodes an experience of time (embodied by the order of training data presented to it), but no space, distance, relative arrangement, body envelope, materiality, physics, or anything else that might contribute to SIILTBness.

Is this enough for it to pose a special kind of threat? Possibly. Psychotic humans, or ones tortured with weird, incoherent sensory streams might form fantastical models of reality and act in unpredictable and dangerous ways as a result. But this is closer to a psychotic homeless person on the street attacking you with a brick than a “super” anything. It is a “sub” SIILTBness, not “super” because the sensory experiences that drive the training do not admit an efficient and elegant fit to a worldlike experience that could fuel “super” agent-like behaviors of any sort.

Again, keep in mind that I’m trying to find the most generous versions of the arguments I can, ones I can try and make myself believe in. There are plenty of ways even this fragile idea could fall apart, and, I suspect, does.

10. Worldlike Trainings

Can we approach artificial SIILTBness another way, by making the training experiences of the AI more coherent? By putting the AI brain into a robot body for starters? Or constraining or structuring its training experiences some other way?

This is much more promising. And to the extent SIILTBness of any sort has been achieved already, it is with AIs that have an element of situatedness and embodiment to them that structures their experiences. For example, I am willing to allow that there may be something it is like to be AlphaGo Zero, as it went from playing randomized games against itself to defeating human players. There’s at least an experience of time there, and a very limited and impoverished worldlike experience that admits partitioning of reality in ways that are both efficient and useful, and might induce aspects that at least resemble I and not-I.

So if you actually wanted to construct an AI capable of coherently evolving along trajectories that get to hyper-SIILTBness, and perhaps exhibiting super-traits of any sort, that’s where you’d start: by feeding it vast amounts of sensorily structured training data that admits a worldlike experience rather than a dreamlike one and induces an I for which SIILTBness is at least a coherent unknown, then cranking up various knobs to Super 11, and finally waiting for it to turn superevil,[5] or at least superindifferent to us.

Then finally, there might be an “alignment” problem that is potentially coherent and distinct enough from ordinary engineering risk management to deserve special attention. I assess the probability of getting there to be about the same as discovering that ghosts are real.

To me, almost all of this seems painfully obvious. And it seems to me the only explanation for why it’s not the default public view is the sensationalist appeal of lurid hyperanthropomorphic projections. Which makes for fun stories, clickbait reporting, and entertaining movies.

It is just fun to think about AIs going “sentient” and “super” and “general” and turning evil and stomping on us all to maximize paperclips and so on. But this philosophy of AI is about as serious as literalist religion, and deserves to be taken about as seriously. Which in my case is “not at all.”

AI is too interesting to sacrifice at the altar of confused hyperanthropomorphism. We need to get beyond it, and imagine a much wider canvas of possibilities for where AI could go, with or without SIILTBness, and with or without super-ness of any sort.

We have a long way to go, plenty of reasons to go there, and no good reasons to fear either the journey or destination in any exotic new ways. Our existing fears are more than good enough to cover all eventualities.

[1] In his famous article, What is it Like to Be a Bat? The key subtlety is that he was talking about what it is like for a bat to be a bat, not what it is like for you to be a bat.

[2] Such an ability would imply a teleportation capability of sorts though.

[3] I have a whole other rant about optimization theory that I’ll save for another day.

[4] These 2 sentences were added after the email newsletter went out. A color is in fact not even an entity or trait of one, but a quality of perception by an entity with SIILTBness, which makes the joke even better.

[5] Let’s make sure to include red LEDs wired to the First Law circuit so we know when it does.

Interesting, I largely agree with your conclusions (if not exactly the arguments that got you there).

- current AI models for the most part have no real agency and so no SIILTBness

- the imaginary hyperintelligent AGIs have agency, but their imagined SIILTBness is a projection of a limited set of human capacities – they have relentless goal-following, but no empathy (the inborn tendency of humans to partly share each other's goals). Basically the model for AGIs is monsters like the xenomorphs from Alien, which in turn are representations of relentless amoral capitalism.

- Alignment research is basically saying, hey, we created these monsters, can we now turn them into housepets so they don't kill us, which is pretty hilarious.

- Part of the fascination with monsters is their partial humanness, or partial SIILTBness in your terms. They are all sort of, "what it is to be an agent with some human traits, magnified and stripped of their compensating tendencies".

- just because AGIs are obviously monsters (that is, projections of humanity's fears of aspects of itself) doesn't mean they can't be real dangers as well.

Crossposted from LessWrong for another user who doesn't have a paid subscription to your substack:

My impression from Section 10 is that you think that, if future researchers train embodied AIs with robot bodies, then we CAN wind up with powerful AIs that can do the kinds of things that humans can do, like understand what’s going on, creatively solve problems, take initiative, get stuff done, make plans, pivot when the plans fail, invent new technology, etc. Is that correct?

If so, do you think that (A) nobody will ever make AI that way, (B) this type of AI definitely won’t want to crush humanity, (C) this type of AI definitely wouldn’t be able to crush humanity even if it wanted to? (It can be more than one of the above. Or something else?)

(I disagree with all three, briefly because, respectively, (A) “never” is a very long time, (B) we haven’t solved The Alignment Problem, and (C) we will eventually be able to make AIs that can run essentially the same algorithms as run adult John von Neumann’s brain, but 100× faster, and with the ability to instantly self-replicate, and there can eventually be billions of different AIs of this sort with different skills and experiences, etc. etc.)

See here for other responses: https://www.lesswrong.com/posts/Pd6LcQ7zA2yHj3zW3/beyond-hyperanthropomorphism