Oozy Intelligence in Slow Time

The genius of AI lies in a literally infinite capacity for taking pains

This essay is part of the Mediocre Computing series

I just connected some very interesting dots between my Fear of Oozification thesis (Sept 29), Matt Webb’s excellent reframing of AI as intelligence too cheap to meter (Oct 6) published a week later, and my old Superhistory, not Superintelligence essay (May 2021). The connection is simply this: oozification in AI is the effect of intelligence being too cheap to meter, and existing in high-detail slow time as a result. I’m very pleased with how this set of ideas is starting to come together.

Matt’s headline is self-explanatory, but you should click through and read it anyway before returning here, since he makes some subtle points.

In my post, I defined oozification as follows:

Oozification is the process of recursively replacing systems based on numerous larger building blocks, governed by many rules, with ones based on fewer, smaller building blocks, governed by fewer rules, thereby increasing the number of evolutionary possibilities and lowering the number of evolutionary certainties.

and applied it to AI like so:

Out of all the possibilities in the vast universe of Lovecraftian horror, why shoggoths in particular?…The answer is: They are the ooziest beings in the Lovecraftian universe…Shoggoths are large masses of barely living protoplasm, … [that] gained a glimmer of “general” intelligence and sentience, enough to threaten their creators.

…

Now there’s an obvious cosmetic mapping to the basic nature of modern machine learning. At the hardware level, GPUs and similar types of accelerator hardware comprise large grids of very primitive computing elements that are rather like a mass of protoplasm. The matrix multiplications that flow through them are a very primitive kind of elan vital. Together, they constitute the primordial ooze that is modern machine learning. There are almost no higher-level conceptual abstractions in the picture. Everything emerges from this ooze, including any necessary (but not necessarily familiar) structure.

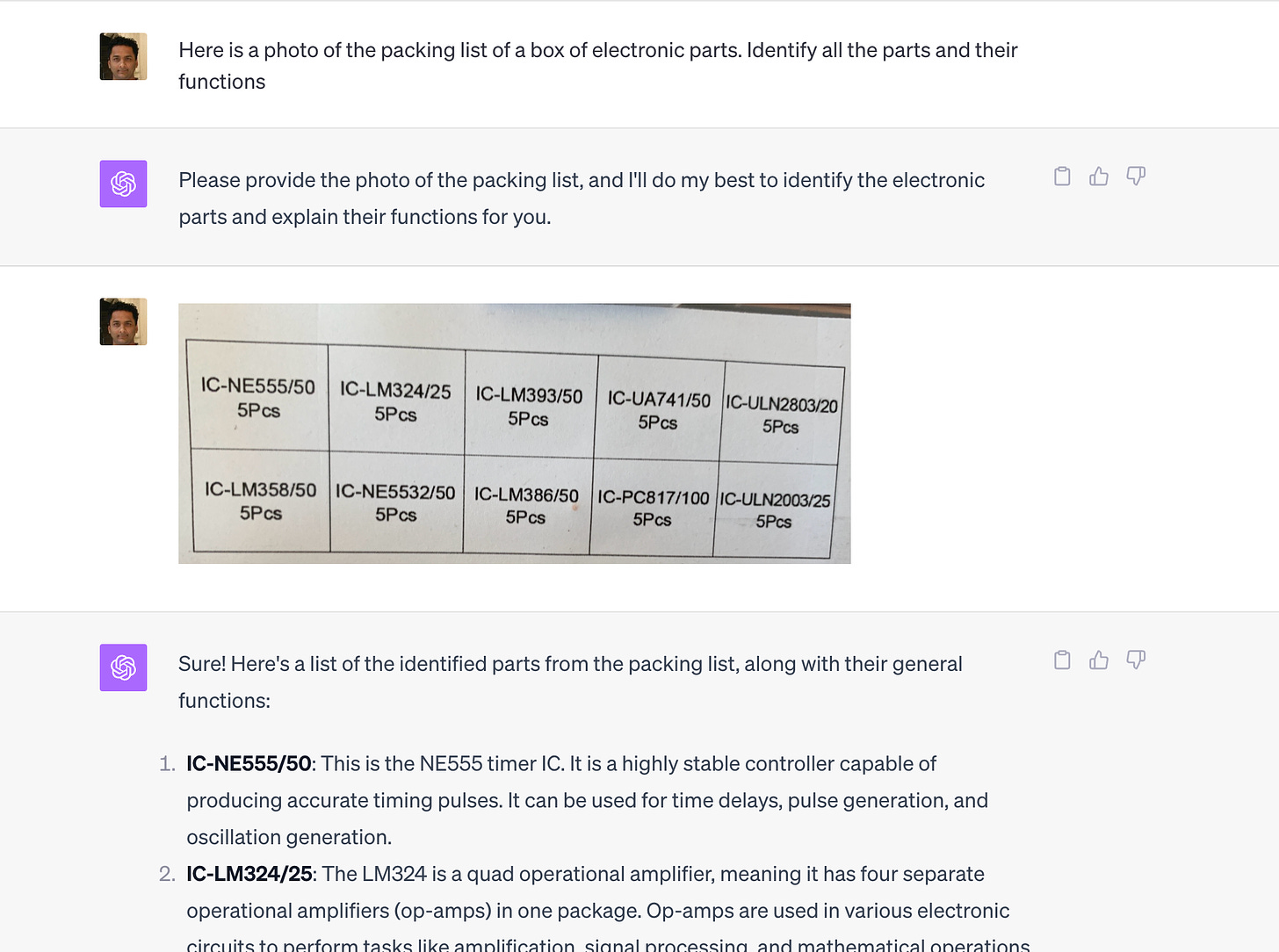

Let me connect the dots here with a particularly clear example. A use case I discovered for GPT4 that has immediately shot to the top of my list of ways to use my $20/mo Jarvis: inventory management for cheap crap.

In playing with electronics, one of the dull, grinder-y things you have to do is look up part numbers and pin-out diagrams for chips, and data sheets for components. Cheap stuff you buy from China, in oozy assortment boxes, is often minimally documented, with just a list of part numbers and counts. A few boxes of ooze like this have been sitting around in my workshop for a couple of years without me doing anything with them, because I’ve been too lazy to do the boring work of looking up part numbers and pin-out diagrams that is necessary and unavoidable for getting to the fun part of playing with them.

I bought one such box a year ago because the assortment included one part I recognized and wanted to learn to use (the classic 555 timer chip), but it came with a bunch of other stuff I’ve been meaning to get around to.

Here is what ChatGPT can do with this problem:

The thing about this problem is that it doesn’t require high intelligence. This is not like beating Magnus Carlsen at chess. It requires cheap intelligence on a lot of raw information. But without intelligence too cheap to meter, I probably wouldn’t systematically solve this problem at all. I wouldn’t generate and save this kind of neat documentation, and I wouldn’t get to the fun part.

Now to be sure, it’s fairly obvious if you do the math on GPUs that ChatGPT is priced outrageously low, and it probably costs an order of magnitude more to provision than OpenAI charges (which is a good reason to pay and essentially get free subsidy money btw). Quite possibly, this query cost more in compute time than the box of cheap parts itself.

But even taking that into account, this is still too cheap to meter. Paying a human to do this would be prohibitive at hobby scale at least.

ChatGPT can do more than just interpret and document photos of packing lists. The browse-with-Bing feature can go schlep around and find and link the data sheets. It’s not yet great at this (the oozy swamp of electronics parts documentation is a bit too high-entropy for it), but it’s already good enough that it triaged away almost all the grinding. I just have to do a few of the messier ones. I just cut and paste the responses into my Roam notebook.

It can even give you basic circuits to try, to familiarize yourself with a chip or part.

The effect of having this too-cheap-to-meter intelligence on tap has been immediate: I’ve actually started playing with these parts I’ve had lying around.

Here’s the connection to oozification: When intelligence becomes too cheap to meter, it becomes cheap enough to inject into the tiniest bits of oozified technology. The vast universe of cheap electronics parts (my varied assortments of ICs are on average under $1) doesn’t justify spending a lot on unlocking its utility. If you spend $30 in intelligence to learn the basics of how to use a $0.50 part, it’s not sustainable. But if you can spend $0.25 for the task, it is. The task is not just below the minimum-wage threshold for human intelligence. It’s below a patience threshold for human attention.
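To make the arithmetic concrete, here is a minimal sketch of that threshold in Python. The dollar figures are the ones from the paragraph above; the function name and the simple "cost must be less than value" rule are my own illustrative assumptions, not a real pricing model.

```python
def worth_the_lookup(part_value_usd: float, lookup_cost_usd: float) -> bool:
    """Hypothetical rule: a lookup is sustainable only if the intelligence
    spent on it costs less than the part it unlocks."""
    return lookup_cost_usd < part_value_usd


PART_VALUE = 0.50  # a typical cheap IC from an assortment box

# The two scenarios from the text: human-priced vs. too-cheap-to-meter.
scenarios = [("human-priced attention", 30.00), ("too-cheap-to-meter AI", 0.25)]

for label, cost in scenarios:
    ratio = cost / PART_VALUE  # how many parts' worth of money the lookup burns
    verdict = worth_the_lookup(PART_VALUE, cost)
    print(f"{label}: ${cost:.2f} per part, {ratio:.1f}x part value, worth it: {verdict}")
```

The point of the sketch is only that the decision flips when the cost of attention drops below the value of the thing being attended to, which is where the patience threshold comes in.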

Matt’s reframe of AI aligns neatly with my own reframe in Superhistory, not Superintelligence, and extends it nicely. In that post, I argued that AI should be considered intelligence that’s simply learned for a very long time in human years, due to the way training works. So in terms of training time, something like GPT is thousands of years old, and anyone using an AI in an integrated way, like Magnus Carlsen learning his chess skills in a feedback loop with chess AIs, is effectively hundreds of years old.

“Too cheap to meter” extends that frame to inference. The reason tasks like looking up part numbers and data sheets are hard is that time is expensive for us humans. But AI in inference mode has all the time in the world compared to you and me. It can do an hour’s worth of browsing around and collecting links in minutes, and what’s more, tirelessly browse and collect for hours. This has big implications.

High-Detail Bandwidth is Slow Time

If you flip it around and look at the pace of real life from the point of view of an AI, it’s clear that time, defined in terms of the rate at which detail (or equivalently, incompressible information) is processed, passes more slowly for AIs.

To see this, ask yourself: How often are you in the right sort of headspace to do boring, tedious, bureaucratic grinding of the sort the parts inventory task requires, and for what tasks are you actually willing to do it at all? In other words, what is your bandwidth for doing high-detail tasks, and what does it cost you?

For me, the kind of headspace/intelligence needed to do the parts list documentation task is similar to, say, the kind needed to do taxes or pay bills. I don’t have to be at my smartest. I do have to be at my most patient and relaxed. And I typically don’t devote that kind of detail-oriented, grinding intelligence to anything less critical than taxes and bills. I grudge the time spent on such tasks even when the upside is in the thousands of dollars worth of savings, and the downside of not doing them is jail. That’s my threshold.

So my answer to the question is: I have a few hours worth of the right kind of mental energy per month, and I use it on things where the stakes are really high. The revealed preferences there explain why I procrastinate on looking up parts and downloading data sheets. What’s at stake is losing $0.50 in unused inventory at worst. Clearly I don’t care enough.

But an AI can do this kind of work relentlessly. It’s like me being willing to do mundane taxes and bills 24/7 without losing my will to live.

From the human point of view, the key point here is not that AIs are working at a blazing pace compared to us (though they are). It’s that they’re getting through a vastly higher volume of tedious detail than would ever be worth our time. The “blazing speed” kind of computing has been around for decades and we’re no longer impressed by it.

The slow time here is not the bullet-dodging Matrix variety, but of the sort in the other slow-time movie trope: of characters like Marvel’s Pietro Maximoff, or DC’s Flash, who effectively freeze time and then go around doing a lot of ordinary things in an extraordinarily short amount of time, like moving objects around, moving people out of the way of harm, straightening people’s ties or putting them in silly poses. They also do things like dodging bullets, but they’re so fast that that’s not the impressive part; the impressive part is the amount of raw schlepping they do in a fraction of a second. I can’t dodge bullets, but I can do everything Maximoff or Flash do in certain scenes. It would just take me far too long for it to be either useful, or worth the cost.

Another way to look at it. Ooze is ooze to humans because we lack the kind of cheap attention required to engage mindfully with its detailed structure at the rate it evolves in real time. Our speed of patience is too low.

The Speed of Patience

But AIs can devote that kind of attention. They do have the necessary speed of patience. Ooze to humans is not ooze to AIs. They have the speed of patience to process it.

The idea of a “speed of patience” is weird. We normally think of patience as the ability to not get frustrated in our dealings with phenomena that unfold slowly. But this is a simplistic view. Patience is a combination of two things: a tolerance limit for a tempo of events, and a tolerance limit for a level of detail. Reality is usually a lot busier at finer levels of detail. Still water at human levels of optical resolution is buzzing with microbial activity under the microscope. Drying paint is uneventful at the level at which it proverbially bores humans, but is buzzing with activity at an atomic scale.

So a better definition of patience is one that admits an associated notion of speed. Patience is the slowest tempo of activity you can tolerate at the finest level of detail you can handle indefinitely. That’s your speed of patience. Since humans can’t handle too much detail, our speed limit is set by very coarse phenomenology. Many people whom we admire for their patience aren’t better at waiting for things to happen. They’re better at operating at a level of detail where things happen faster. When you say “I don’t have patience for those details” you’re making a point about level of detail, not time. You’re saying that certain phenomena look like ooze to you because they’re below the level of detail you can comfortably process, at the required rate.

So if you’re feeling impatient because things are going too slow, you could zoom in to a level of detail where they might be fast enough, but then you likely wouldn’t be able to tolerate the detail without help and preparation. If such zooming in is too costly, you can’t stay at that level steadily enough. Staring at a lake is free. Staring at drying paint is free. Microscopes are costly and strain your eyes.

In fact, one uncommon characterization of intelligence is in terms of patience and detail-level. Thomas Carlyle: Genius is an infinite capacity for taking pains. We talk of painstaking work. The word pain is revealing. It’s costly for humans to deploy attention outside our default band of detail. Our brain, like our eyes, has a “focal length,” a resting attentional gaze, and a range of accommodation. Going beyond that range causes brain strain and is costly. We are not general intelligences to the extent that cost landscape is uneven. There are places our attention cannot go at all because it’s too costly. This looks like ooze to us.

This is, by the way, one reason I really dislike the idea of “general” intelligence, and have argued elsewhere that it is fatally flawed. Human intelligence is not general (even though the computing machinery underlying it, as with AIs, is “universal” in a specific sense). Our intelligence is of a specifically adapted kind. One way to get at this non-generality is to consider the cost of paying attention at various tempos and levels of detail. It is costlier for us to pay attention at fine levels of detail, and very high or very low tempos. Our ideal zone of attention is chasing and hunting down an animal about our own size, or picking fruit from trees. That’s our patience bandwidth.

The idea of intelligence as an exceptional capacity for processing detail derives from this specific view of intelligence. But with AI, which can operate at arbitrary levels of abstraction at roughly the same cost (once it’s in the form of text or images, the AI doesn’t care whether it’s looking through a microscope or telescope, or considering lofty philosophy questions or the meaning of a part number), it’s better to think of it as having a higher speed of patience. What you and I rarely have the patience for, AI can do all the time.

So if ooze is not ooze to AI, what is it?

It’s rich, absorbing detail coupled with all the time in the world to pay attention to it at whatever rate it evolves. If, as John Salvatier observed, reality has a surprising amount of detail, AI has a surprising amount of patience in dealing with it. And the ability to deploy the patience fast enough to keep up with ooze.

False Peak and Steady Schleps

The speed of patience, i.e., the tempo of events at the level of detail (with associated rate of evolution) you can steadily pay attention to, is arguably the most important measure of an intelligence, but we tend to use the exact opposite kind of measure.

We humans tend to evaluate intelligence in peak-performance mode. How smart were you during that crucial chess championship game? How smart were you while staring at that difficult theorem just before you saw how to prove it? How smart were you when you took that IQ test? When you took the IIT entrance test?

There is a certain narcissistic anthropocentrism to this temporal highlighting of apparent peak performance moments. We pay attention to those episodic displays of intelligence coming to fruition in a charismatic way because our identities are tied to the esteem and rewards they bring. We barely regard other regimes of cognition as intelligence at all. Like hours of patient grinding acquiring some sort of basic literacy. Or “10,000 hours” of deliberate practice repeating some boring set of actions until it’s in muscle memory and looks miraculous to those who have put in 10 hours. Or thousands of hours working with a tool and learning its nuances. We even downplay this effort because it takes away from the charisma and mystique of the peak moments.

We don’t think of an “infinite capacity for taking pains” as genius. We think of it as a good work ethic. We think of it as saintly conscientiousness. Monkish mindfulness.

We don’t think of the ability to grind on at a task indefinitely as intelligence, we think of it as stamina.

An analogy from mechanical engineering is helpful here. The strength of parts is often measured using what are known as S/N curves. Roughly, given a level of repetitive stressing, how many repetitions can a part endure before it fails from fatigue? Interestingly, there’s a level of stress below which the number of repetitions is essentially infinite. The curve slopes down initially (high stress breaks you after a few repetitions) but then flattens out above zero. You typically want to design a part so that the loading in use is in that flat part.
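For readers who like to see the shape of the curve, here is a rough sketch of an idealized S/N curve in Python. It assumes the common textbook idealization: a power law (Basquin-style) relating stress to cycles-to-failure, plus a hard endurance limit below which life is treated as infinite. The parameter values are made up for illustration and do not describe any real material.

```python
import math


def cycles_to_failure(stress: float, endurance_limit: float,
                      coeff: float, exponent: float) -> float:
    """Idealized S/N curve.

    Above the endurance limit, assume stress = coeff * N**exponent
    (exponent < 0), so N = (stress / coeff) ** (1 / exponent).
    At or below the endurance limit, treat life as infinite.
    """
    if stress <= endurance_limit:
        return math.inf  # the flat part of the curve
    return (stress / coeff) ** (1.0 / exponent)


# Hypothetical parameters, chosen only to make the numbers readable.
ENDURANCE_LIMIT = 300.0
COEFF = 1000.0
EXPONENT = -0.1

for stress in (800.0, 500.0, 250.0):
    n = cycles_to_failure(stress, ENDURANCE_LIMIT, COEFF, EXPONENT)
    print(f"stress {stress:.0f}: {n:.0f} repetitions")
```

High stress breaks the part after a handful of repetitions, moderate stress after many more, and anything under the endurance limit can be repeated indefinitely. That last regime is the one the metaphor cares about.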

Indefinitely extended repetition behaviors, I increasingly suspect, constitute a better phenomenological ground for understanding what intelligence even is. It’s not the Aha! moments of insight and clarity that feel great and look like peak cognitive performance. It’s the steady, patient, continuously deployed level of attentiveness we bring to the endless stream of incompressible detail that is existence itself. “Aha!” moments occasionally put us ahead in a leveraged way as far as winning prizes is concerned, but if we had enough time, we wouldn’t even need them. We could just keep grinding.

Applying the S/N curve metaphor to intelligence, we’re used to thinking about it in a “one-rep max” way. What level of stress will break you after zero or one repetition? But that kind of intelligence is actually just a misleading fiction. Intelligence does not work that way. Yes, we do sometimes pull off heavy lift, non-repetitive thinking, but most of the time, the peaky appearance is about visible outcomes during spotlight windows, not effort. The effort is typically lower-stress/higher-repetition cognition that goes on invisibly for a long time before visible effects occur. It’s the 90% of the temporal iceberg of cognition. The work is done in the darkness. The prizes are won in the spotlighted windows of pseudo-peak performance.

In fact, taking darkness literally, I wouldn’t even restrict this account of intelligence to wakefulness. Human sleep is almost never considered part of “intelligent time” though a little poking around the neurophysiology of sleep shows that it obviously is. A lot of dull, grinding house-keeping and maintenance goes on during sleep. Long-term memories get properly processed and laid down, stuff gets repaired, garbage gets collected (in both the computing and literal senses). Problems on your mind when you go to bed yield to sleep and you wake up with the answers.

Maybe we’re in fact smartest when we’re asleep. Our speed of patience is highest there. So high that we don’t even trust ourselves to be conscious for it, with our narcissistic waking selves getting in the way.

Moravec Extended

All this is intelligence at work. Some of it isn’t even in the organ we identify with intelligence, the brain. Instead it’s in the spinal cord, endocrine system, gut, and so on. Even human intelligence is an oozy, whole-bodied, 24/7 somatic phenomenon. Identifying it with just the wakeful brain at its most alert (or worse, just the neocortex with its capacity for abstract reasoning and executive decision-making during those periods) is yet another anthropocentric-narcissism category error.

We’ve seen this kind of error before actually. Moravec’s paradox, an idea from the 80s, observes that what early AI researchers thought was hard (chess, theorem proving) turned out to be easy, and what they thought was easy (turning doorknobs, identifying objects, stuff even not-very-bright babies and smarter animals learn to do) was the actual hard stuff.

To take that thought to an extreme: Maybe it’s the stuff we do when asleep that’s hard — dreaming, memory integration, restoring emotional equilibrium. The stuff we do when awake is the easy stuff. Puts a whole new spin on “I can do that in my sleep” doesn’t it?

The extended Moravec’s paradox is this: We thought generating inspired moments of peak intelligence was hard, and steady, patient intelligence easy. Turns out, it’s the other way around. Those inspired moments are not even real. They’re mostly narrative illusions created by periods of strong emotional self-regulation and courage, not intelligence. It’s the steady, patient grinding that actually creates the possibility of those moments, and is expensive to do.

Oozification extends Moravec’s paradox in the patience space of tempo and detail level. The peak “Aha!” moments of insight are easy. Steady, attentive grinding focused on the surprising amount of detail in reality is hard. Things you can “do in your sleep” are really hard.

Momentary enlightenment is easy. Chopping wood and carrying water is hard. Building a circuit and enjoying the momentary thrill of watching it operate correctly when you turn on the power is easy. Looking up part numbers and datasheets, and patiently troubleshooting the circuit when it fails to work is hard. It’s rarely a deep insight-generating bug or error. It’s usually a detail you carelessly got wrong or forgot.

Fun aside: If you do a lot of detailed, painstaking work during the day, guess what you will dream of at night? And how much work those dreams do? It’s no accident I’ve been having dreams about electronic parts recently. They’re not as vivid as Tetris dreams, but pretty vivid.

And it’s all hard not in a “this needs higher IQ” way or even in a “this needs gritty courage” way; it’s hard in a “this needs more patience and time” way. It’s hard in a “this needs intelligence too cheap to meter” way. It’s hard in an “infinite capacity for taking pains” way.

You’re Still Not an Artisan

This line of thought also has me changing my mind about an idea I came up with 10 years ago, in a blog post that was quite popular at the time, You Are Not an Artisan.

The main point in that post, a critique of “artisan” pretensions, was that humans want to do sexy work, and leave schleppy (“dull, dirty, dangerous”) work to machines, but that the comparative advantage of humans is in fact dealing with schleppy but intelligence-demanding work that doesn’t lend itself to automation of the repetitive, algorithmic-scaling kind we associate with assembly lines. I used a pair of early modern archetypes — the bard and the chimney sweep — to lay out the distinction, and argued, essentially, that you should abandon the narcissistic self-regard of bards and choose to be a chimney sweep in the innards of technology. That sexy work was in fact easier for computers to do, and therefore we should let them do it, and find meaning in schleppy work.

That whole post reads interestingly not-even-wrong now, but I think it was groping at precisely the understanding of our relationship with computing technology that’s now much more obvious in the context of modern AI. The sexy-schleppy distinction, and the bard/chimney-sweep pair, are simply too coarse for thinking about oozy intelligence. Sexy/schleppy are entangled at the smallest scales of existence. We are all made of bard/chimney-sweep ooze. So are our computers.

The headline claim still holds, but in a different way. You’re still not an artisan. But not because your pretensions to bard-hood are fake posturing (though they are). You’re not an artisan in the sense that the “you” in question is not the charismatic moments of aesthetic self-presentation, exhibiting a certain kind of high-status excellence in public. It is your whole 24/7, full-bodied oozy-intelligent self. A self that’s doing a fractal mix of sexy and schleppy things all the time, at a deep level of detail that you’re mostly not even aware of. Probably much of it while the artisan “you” is in fact asleep.

As an aside: my own traversal of this line of thought itself illustrates this whole idea. I’ve been schlepping down this bunny trail for at least a decade, going by that 2013 post. Probably longer. It’s all just patient accumulation and processing of a lot of details, a lot of it probably in my sleep. But if a particular charismatic idea like “oozification” catches on, it looks like an inspired thought that came from nowhere.

Putting it Together

The differences and similarities between human and machine intelligence are much more subtle than we think, and the terms of reference for interesting and useful comparisons need to be equally subtle. Setting aside anthropocentric conceits and lurid superintelligence narratives is just the first step. Constructing a better view of intelligence is a long, patient schlep that’s just getting started. And there is a lot of oozy detail to be worked out.

We have to think in terms of the cost of computation in time, at extremely fine scales. We have to talk about the scope of application of intelligence in terms of the lowest level of oozy detail it can handle, at the rate of real-time evolution.

I think the right mental model for thinking about AI is finally starting to come together. I feel like I have 80% of the pieces I need, even if the picture isn’t completely put together:

Oozification of technological substrates

Intelligence too-cheap-to-meter

Superhistorical time for training

Slow/ooze time for inference

The speed of patience

The cost of attention and non-generality of intelligence

“AIs have a surprising amount of patience.”

Steady attention floor of reality detail level

Extended Moravec’s paradox — sleep wiser than wakefulness

Fractally intertwingled sexy/schleppy thinking going all the way down

Chop wood/carry water thinking over “enlightenment” thinking

Somatic/whole-bodied intelligence and oozy transhumanism

These pieces add up to a very unfamiliar understanding of intelligence, but one that feels right and useful. The model is not hard to grasp, but is identity-threatening. As with every Copernican revolution that humanity has lived through, the key is letting go of anthropocentric conceits and identity-anchored categories of analysis. But that’s merely necessary, and nowhere near sufficient.

Frames of “superintelligence,” “generality,” “alignment” and God-like machines are attractive because they are highly identity-affirming. Even as they offer shallow, lurid Halloween-grade scares, they actually flatter our anthropocentric conceits.

This picture isn’t yet fully put together. It feels like a rough first cut, so I’ll be schlepping on this idea more. But in the meantime, I want to point to a couple of good posts by others who’ve picked up on the oozification line of thought.

Simon de la Rouviere had a great riff about narrative oozification in fiction. This is a really promising way to think about narratives I think, and an angle I definitely missed.

Michael Dean has a similar riff about oozification in music, specifically the creation of AI-generated Beatles songs.

If you know of any others, let me know. Several people said they liked the oozification article, and I’m hoping more of them are taking the idea in interesting directions.

These analyses increase my confidence that we’re all on to something here with this line of thought. Let’s see where it leads.

Loved this--it inspired an AI use case for me today. My team sent me a bunch of differently formatted text docs with time spent in meetings. Instead of doing what I would normally do, normalizing in Excel, I just dumped the mess into Claude and started asking it questions. It worked beautifully.

Another thought which occurs to me - inspired by this essay and also the idea that "big data is when it's cheaper to store it than to figure out what to do with it" - a bit like Quantum Mechanics, there's something fundamentally inhuman about the way AI works. You basically can't mental model it with intuitive understanding or folk physics - you need to learn a new set of conceptual tools.